Automation Year in Review: The Shift to "Vibe Ops"

2025 has been a strong and eventful year for the practical application of LLMs. While model capabilities grew, the most interesting developments weren't just about raw intelligence but about how we harness it to do actual work. What follows is a list of personally notable and mildly surprising "paradigm changes" in the automation landscape: shifts that altered how we build, debug, and deploy business logic.

1. Vibe Coding for Operations

2025 is the year automation crossed a capability threshold: we stopped writing scripts and started describing intent. Andrej Karpathy coined the term "vibe coding" for building software by describing what you want in plain English and letting the model write the code, to the point where you "forget that the code even exists." For years, RevOps teams and technical founders were stuck writing rigid Python scripts or wrestling with brittle drag-and-drop no-code builders. That era is effectively over.

We are seeing a shift where the "compiler" for business logic is no longer a strict syntax parser but a probabilistic model. In Midpoint, for example, you simply "describe your workflow" in natural language (say, a Gmail chat agent or a lead scoring pipeline), and the system architects the integration. The surprise isn't that it works, but how quickly it became the default. Writing boilerplate API connectors by hand now feels as archaic as writing assembly for a web app.

2. The Rise of the "App Layer" DAG

For a long time, "AI agent" meant a chatbot. In 2025, we finally saw a new layer of LLM apps emerge that orchestrate multiple model and tool calls into Directed Acyclic Graphs (DAGs). This is distinct from a conversation. It is about "context engineering": getting the right information into each step of the flow.

Operators are moving away from single-shot prompts toward flows that manage state across tools. Blueprints like "Daily Email About Today's GCal Meetings" show the pattern: the flow triggers on a schedule, pulls data from Google Calendar, enriches it via OpenAI, and formats a briefing. The LLM isn't just generating text; it is routing data between Postgres, Slack, and email without human intervention. This orchestration layer is what strings disparate tools into a coherent flow while balancing performance and cost.
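To make the DAG idea concrete, here is a minimal sketch in Python, assuming each step is a plain function that receives the outputs of the steps it depends on. The node names (`fetch_meetings`, `fetch_crm_notes`, `enrich`, `format_briefing`) and their data are hypothetical stand-ins for the Google Calendar, Postgres, and OpenAI steps in the blueprint described above; this is not how Midpoint implements its flows. Execution order comes from the standard library's `graphlib.TopologicalSorter`.

```python
from graphlib import TopologicalSorter
from typing import Any, Callable

# A node receives {upstream_node_name: output} and returns its own output.
Node = Callable[[dict[str, Any]], Any]


def run_dag(nodes: dict[str, Node], deps: dict[str, set[str]]) -> dict[str, Any]:
    """Run every node once, in dependency order, passing each node its parents' outputs."""
    results: dict[str, Any] = {}
    for name in TopologicalSorter(deps).static_order():  # raises CycleError on cycles
        upstream = {parent: results[parent] for parent in deps.get(name, set())}
        results[name] = nodes[name](upstream)
    return results


# Hypothetical stand-ins for the real integrations; no external APIs are called.
def fetch_meetings(_: dict[str, Any]) -> list[dict[str, str]]:
    # Would query Google Calendar for today's events.
    return [
        {"time": "09:00", "title": "Pipeline review"},
        {"time": "14:00", "title": "Acme renewal call"},
    ]


def fetch_crm_notes(_: dict[str, Any]) -> dict[str, str]:
    # Would look up account notes in Postgres, keyed by meeting title.
    return {"Acme renewal call": "contract ends next month"}


def enrich(up: dict[str, Any]) -> list[str]:
    # Would call an LLM; here it just joins each meeting with its CRM note.
    notes = up["fetch_crm_notes"]
    return [
        f"{m['time']} {m['title']}: {notes.get(m['title'], 'no notes')}"
        for m in up["fetch_meetings"]
    ]


def format_briefing(up: dict[str, Any]) -> str:
    return "Today's meetings:\n" + "\n".join(f"- {line}" for line in up["enrich"])


NODES = {
    "fetch_meetings": fetch_meetings,
    "fetch_crm_notes": fetch_crm_notes,
    "enrich": enrich,
    "format_briefing": format_briefing,
}
# Each key maps to the set of nodes it depends on.
DEPS = {
    "fetch_meetings": set(),
    "fetch_crm_notes": set(),
    "enrich": {"fetch_meetings", "fetch_crm_notes"},
    "format_briefing": {"enrich"},
}

if __name__ == "__main__":
    print(run_dag(NODES, DEPS)["format_briefing"])
```

Because `fetch_meetings` and `fetch_crm_notes` share no edge, a production runner could execute them concurrently using the sorter's `get_ready()`/`done()` interface instead of `static_order()`; the sequential loop just keeps the sketch short.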

3. Managing "Jagged Intelligence"

One of the most important realizations of the past year is that LLM intelligence is "jagged": a model can act like a genius polymath one moment and fail at basic logic the next. We aren't evolving animals; we are summoning ghosts, and sometimes those ghosts get confused. That creates a real problem for automation: how do you build reliable pipelines on top of non-deterministic models?

The answer has been to build self-healing mechanisms directly into the runtime. Because model performance fluctuates, the infrastructure must be "elastic" and able to catch errors during execution. Instead of simply failing when the "ghost" makes a mistake, these systems detect the error (malformed output, a failed validation) and try to fix it in real time, for example by retrying with the error fed back to the model. Reliability in 2025 isn't about perfect models; it's about robust harnesses that keep the "jaggies" from breaking production.
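One common shape for such a harness is a validate-and-repair loop: call the model, check its output, and if the check fails, re-prompt with the concrete error. The sketch below assumes the model is any function from prompt to text and that the expected output is a JSON object with known keys; `run_with_repair` and the flaky stand-in model are hypothetical names for illustration, not a specific platform's API.

```python
import json
from typing import Any, Callable


def run_with_repair(
    call_model: Callable[[str], str],
    prompt: str,
    required_keys: set[str],
    max_attempts: int = 3,
) -> dict[str, Any]:
    """Call a model, validate its JSON reply, and feed any error back for another try."""
    current_prompt = prompt
    last_error = "no attempts made"
    for _ in range(max_attempts):
        raw = call_model(current_prompt)
        try:
            data = json.loads(raw)  # json.JSONDecodeError subclasses ValueError
            if not isinstance(data, dict):
                raise ValueError("expected a JSON object")
            missing = required_keys - set(data)
            if missing:
                raise ValueError(f"missing keys: {sorted(missing)}")
            return data
        except ValueError as exc:
            last_error = str(exc)
            # Repair step: restate the task plus the concrete validation error.
            current_prompt = (
                f"{prompt}\n\nYour previous output was invalid ({last_error}). "
                "Return only a corrected JSON object."
            )
    raise RuntimeError(f"gave up after {max_attempts} attempts: {last_error}")


def make_flaky_model(prompts_seen: list[str]) -> Callable[[str], str]:
    """Hypothetical stand-in model: first reply is truncated JSON, second is valid."""
    replies = iter(['{"score": 87', '{"score": 87, "reason": "ICP match"}'])

    def model(prompt: str) -> str:
        prompts_seen.append(prompt)
        return next(replies)

    return model


if __name__ == "__main__":
    seen: list[str] = []
    result = run_with_repair(make_flaky_model(seen), "Score this lead as JSON.", {"score", "reason"})
    print(result, "after", len(seen), "calls")
```

The bounded `max_attempts` matters: a harness that retries forever just moves the failure somewhere harder to see. Errors raised by `call_model` itself (timeouts, HTTP failures) are deliberately not caught here and would need their own retry policy.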

4. Efficiency via "Chain-of-Draft"

As inference costs dominate operational expenses (often 70–90% of LLM OPEX), we are seeing a pivot away from verbose "Chain-of-Thought" prompting toward "Chain-of-Draft" (CoD). The industry realized that asking a model to "think step by step" was generating too much expensive fluff.

CoD forces models to be concise by limiting each reasoning step to a short draft of at most five words, which reportedly reduces token usage by up to 75% and latency by over 78% while maintaining accuracy. For high-volume workflows, such as processing thousands of support tickets or scoring leads in real time, this is a game changer. Platforms like Midpoint are optimizing for the "lowest cost per run" by implementing these efficient prompting strategies under the hood, ensuring that you aren't paying for the model to ramble when you just need a JSON output.
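In practice, CoD is mostly a prompt plus a bit of output handling. The sketch below assumes a generic chat-style message list; the instruction text is modeled on the prompt commonly quoted from the Chain-of-Draft paper (drafts of at most five words, final answer after a `####` separator), but treat the exact wording as an assumption. `build_cod_messages` and `parse_cod_response` are hypothetical helpers, not a library API.

```python
# Instruction modeled on the Chain-of-Draft prompt; exact wording is an assumption.
COD_INSTRUCTION = (
    "Think step by step, but only keep a minimum draft for each thinking step, "
    "with 5 words at most. Return the answer at the end of the response "
    "after a separator ####."
)


def build_cod_messages(question: str) -> list[dict[str, str]]:
    """Generic chat-style message list; adapt the shape to whichever client you use."""
    return [
        {"role": "system", "content": COD_INSTRUCTION},
        {"role": "user", "content": question},
    ]


def parse_cod_response(text: str, max_words: int = 5) -> tuple[list[str], str, bool]:
    """Split a CoD reply into draft steps and the final answer.

    The boolean is True only if the reply used the #### separator and every
    draft step stayed within the word budget.
    """
    drafts_part, sep, answer = text.rpartition("####")
    if not sep:
        # Model ignored the format: return the whole reply as the answer, flagged.
        return [], text.strip(), False
    drafts = [line.strip() for line in drafts_part.splitlines() if line.strip()]
    compliant = all(len(step.split()) <= max_words for step in drafts)
    return drafts, answer.strip(), compliant


if __name__ == "__main__":
    # Illustrative reply in CoD style (not real model output).
    drafts, answer, ok = parse_cod_response("20 - x = 12\nx = 8\n#### 8")
    print(drafts, answer, ok)
```

Returning a compliance flag rather than raising lets a pipeline decide what to do with a rambling reply: accept the answer, re-prompt, or fall back to a more verbose strategy for that one item.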

5. Multimodal as the New CLI

Finally, the interface for automation is breaking out of the text box. With models like Mistral's Voxtral, we are seeing function calling directly from voice input. This enables workflows that trigger on audio or video, such as a webhook that receives a file, processes it, and kicks off a downstream action.

This feels like a return to the early days of computing, but inverted. Instead of humans learning command lines to talk to computers, computers are learning to listen to our messy, multimodal inputs (voice notes, images, Loom videos) and make precise API calls in response. The friction of "inputting data" is disappearing, replaced by agents that can "hear" a request and run a complex sales briefing workflow instantly.
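The webhook pattern described in this section can be sketched as a small router: accept an upload, branch on its MIME type, let a model turn the audio into a tool call, and execute only tools the harness has registered. Everything here is an assumption for illustration: `plan_from_audio` is a hypothetical stub standing in for a speech model with function calling (it does not call Voxtral or any real API), the tool call uses an OpenAI-style `{"name", "arguments"}` shape with JSON-encoded arguments, and `create_sales_briefing` is a made-up downstream action.

```python
import json
from typing import Any, Callable

# Registry of downstream actions the model is allowed to trigger (hypothetical tools).
TOOLS: dict[str, Callable[..., Any]] = {}


def tool(fn: Callable[..., Any]) -> Callable[..., Any]:
    """Register a function under its own name so a model's tool call can reach it."""
    TOOLS[fn.__name__] = fn
    return fn


@tool
def create_sales_briefing(account: str, meeting_date: str) -> str:
    # A real flow would kick off the briefing workflow here.
    return f"briefing queued for {account} on {meeting_date}"


def plan_from_audio(audio: bytes) -> dict[str, str]:
    # Hypothetical stand-in for a speech model with function calling. A real
    # version would send `audio` to a provider and receive a tool call back;
    # this stub ignores the bytes and returns a fixed, OpenAI-style call shape.
    return {
        "name": "create_sales_briefing",
        "arguments": json.dumps({"account": "Acme", "meeting_date": "2025-12-01"}),
    }


def handle_webhook(content_type: str, body: bytes) -> Any:
    """Route an uploaded file by MIME type, then execute the tool call it yields."""
    if content_type.startswith("audio/"):
        call = plan_from_audio(body)
    else:
        # Images or video would get their own branch in a fuller version.
        raise ValueError(f"unsupported content type: {content_type}")
    fn = TOOLS.get(call["name"])
    if fn is None:
        # Never execute a tool name the harness did not register.
        raise ValueError(f"model requested unknown tool: {call['name']}")
    return fn(**json.loads(call["arguments"]))


if __name__ == "__main__":
    print(handle_webhook("audio/ogg", b"voice-note-bytes"))
```

The allow-list in `TOOLS` is the important design choice: the model proposes an action, but only functions the operator explicitly registered can run.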

TL;DR: The friction of connecting tools is vanishing. We are moving from a world of rigid scripts to one of "vibe ops," where intent is the only programming language required. The tools that win this year will be the ones that make this transition feel invisible. Strap in.

More articles

Where U.K. Businesses Are Really Seeing Value From AI

U.K. enterprises are getting real ROI from AI agents in high-volume workflows. Here’s how to scale means-to-outcomes with AI automation tools.

How to Build Midpoints: A Practical Guide to AI Automation With AI Agents

Learn how to build Midpoints end to end: define triggers, connect tools, use AI agents and LLMs, test, deploy, monitor, and ship fixes fast.

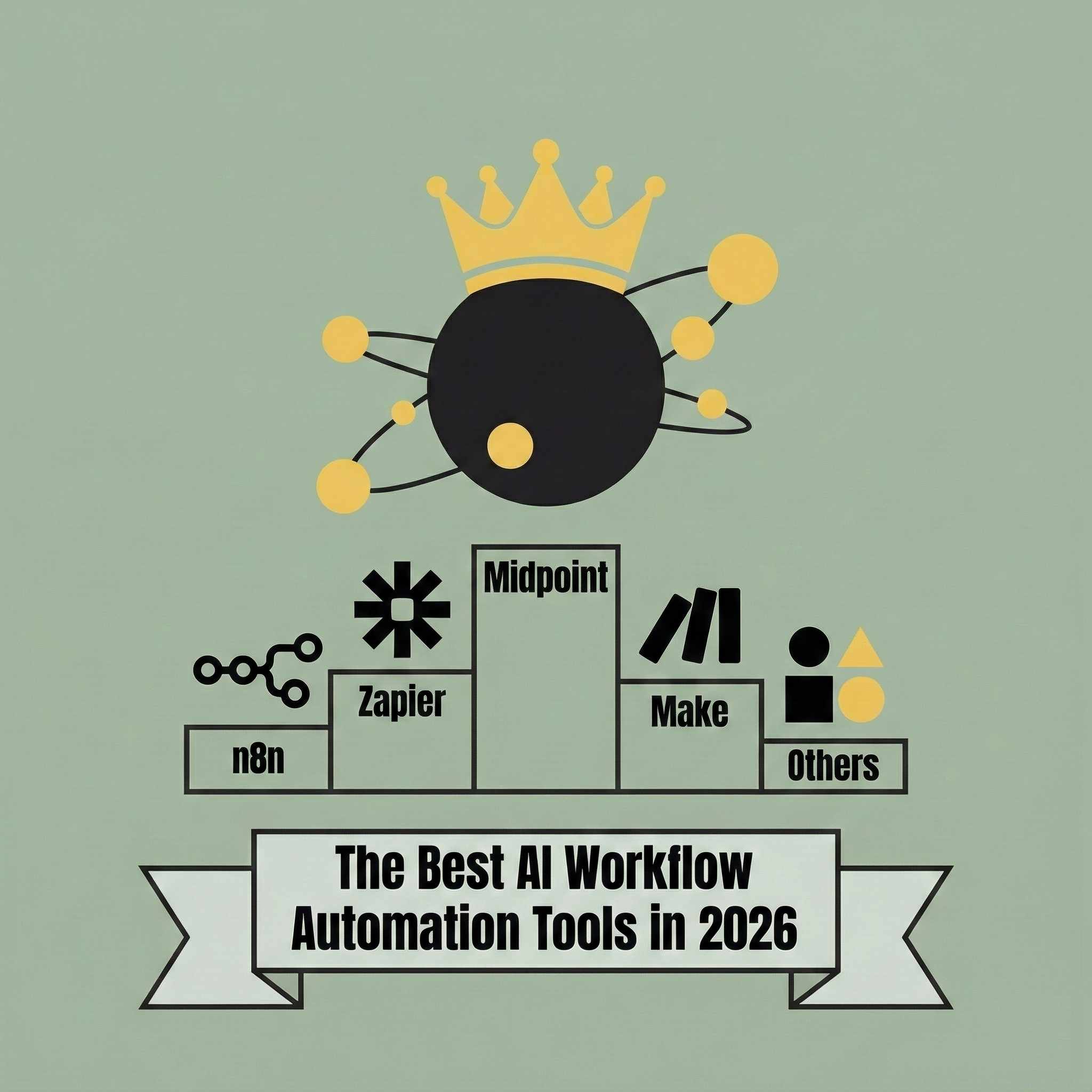

Midpoint Turns Complex Wiring Into Intent

In 2026, the best AI workflow automation tools are defined by whether they can understand intent, build workflows on your behalf, and keep them running without constant manual debugging. This post breaks down the leading platforms and explains why Midpoint stands out by letting you describe the workflow instead of building it yourself.